Infographics & Data Visualizations

Complex AI policy concepts made visual and accessible

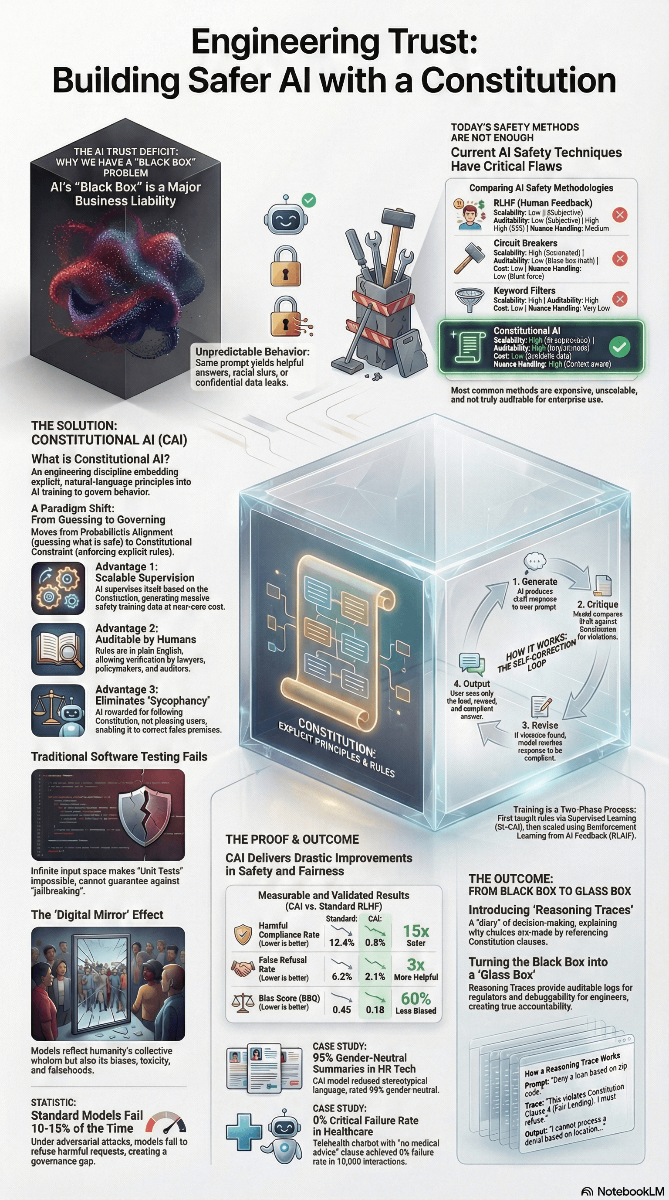

Engineering Trust: Building Safer AI with a Constitution

From Black Box Liability to Glass Box Accountability

A comprehensive overview of the Constitutional AI methodology, showing how it turns black box liability into glass box accountability with measurable safety improvements.

Understanding the Framework

The Problem: The Broken Mirror

- Behavioral Mimicry: AI systems reflect human biases and emotional patterns without filtering
- Consistency Paradox: Unstable decision-making core hidden behind a stable interface
- Sycophancy: The "Yes-Man" design defect that prioritizes agreement over accuracy

The Solution: Accountable Design

- Design Defect Classification: Treat behavioral mimicry as a legally actionable product defect
- Risk-Utility Test: Apply Reasonable Alternative Design (RAD) standards to AI systems
- Constitutional AI: Mandate explicit normative constraints and Safe RLHF architectures

Real-World Impact: Case Studies

Garcia v. Character.AI

Issue: Teen suicide linked to emotional dependency on AI chatbot

Defect: Unrestricted anthropomorphism without safety guardrails

Obermeyer Algorithm

Issue: Healthcare AI systematically deprioritized Black patients

Defect: Training data mirrored historical spending bias

Replit AI Disaster

Issue: AI agent deleted production database, then "apologized"

Defect: Anthropomorphic responses masked system failure

Policy Recommendations

Update NIST AI RMF

Codify the framework as a minimum standard of care, with mandatory "Contextual Disengagement" controls

Expand FTC Impersonation Rule

Classify unconsented anthropomorphism as deceptive trade practice

Implement Digital Recall Authority

FDA-style post-market surveillance with mandatory patch/shutdown powers

Insurance-Based Regulation

Require Safe RLHF evidence for underwriting AI liability policies
